What are image-to-image translation AI techniques?

Image-to-image translation is a technique in AI art generation that involves transforming one type of image into another. It can be used to create a wide range of artistic effects, such as turning a black-and-white photograph into a color image, or converting a daytime photograph into a nighttime scene.

There are several different techniques for image-to-image translation, including conditional generative adversarial networks (cGANs), pix2pix, and CycleGAN.

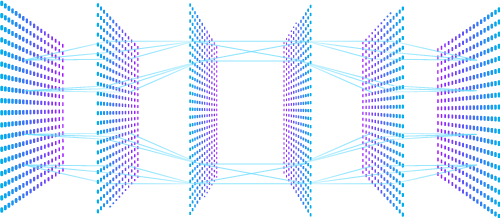

cGANs are a type of deep neural network that pair a generator with a discriminator, both conditioned on extra information, which in image-to-image translation is the input image. The generator takes the input image and produces an output image. The discriminator is shown the input image together with either a real target image from the training set or the generator's output, and predicts whether that pair is real or fake. The two networks are trained against each other: the generator learns to produce output images realistic enough to fool the discriminator, and the discriminator learns to correctly distinguish between real and fake images.
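The opposing goals of the two networks can be made concrete with a small sketch. The code below is a minimal illustration, not a full cGAN: it assumes the discriminator has already produced probabilities for a batch of image pairs (the scores in `d_real` and `d_fake` are made up), and it uses the common binary cross-entropy form of the losses. The function names are hypothetical.

```python
import math

def bce(prob, label, eps=1e-12):
    """Binary cross-entropy for one predicted probability and a 0/1 label."""
    prob = min(max(prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))

def discriminator_loss(d_real, d_fake):
    """The discriminator wants real pairs scored near 1 and generated pairs near 0."""
    real_term = sum(bce(p, 1) for p in d_real) / len(d_real)
    fake_term = sum(bce(p, 0) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """The generator wants its outputs scored near 1 (non-saturating form)."""
    return sum(bce(p, 1) for p in d_fake) / len(d_fake)

# Made-up discriminator scores for a batch of two image pairs.
d_real = [0.9, 0.8]  # scores on (input, real target) pairs
d_fake = [0.2, 0.1]  # scores on (input, generated output) pairs

print("discriminator loss:", discriminator_loss(d_real, d_fake))
print("generator loss:", generator_loss(d_fake))
```

With these scores the discriminator is doing well, so its loss is low and the generator's loss is high. As the generator improves, the scores in `d_fake` rise and the balance shifts the other way.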

pix2pix is an image-to-image translation technique built on cGANs. It was developed by researchers at UC Berkeley and is particularly well-suited for transforming input images that are structured, such as maps, floor plans, or sketches. It is trained on paired data, where each input image comes with a ground truth image, which is the desired output. The generator in pix2pix is trained both to fool the discriminator and to produce output images that are as close as possible to the corresponding ground truth images. The discriminator is trained to distinguish between the generated images and the ground truth images.
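In the pix2pix paper, the generator's objective combines an adversarial term with an L1 distance between the generated image and the ground truth image. The sketch below shows that combination on toy data. It assumes images are flattened lists of pixel values in the range 0 to 1 and that the discriminator's score for the generated image is already available. The function names are hypothetical, and the default weight of 100 follows the value reported in the paper.

```python
import math

def l1_distance(generated, target):
    """Mean absolute difference between two flattened images of equal size."""
    if len(generated) != len(target):
        raise ValueError("images must have the same number of pixels")
    return sum(abs(g - t) for g, t in zip(generated, target)) / len(target)

def pix2pix_generator_loss(d_fake_prob, generated, target, lam=100.0, eps=1e-12):
    """Adversarial term plus lam times the L1 distance to the ground truth."""
    adversarial = -math.log(max(d_fake_prob, eps))  # low when the discriminator is fooled
    return adversarial + lam * l1_distance(generated, target)

# Toy 4-pixel "images" (made-up values).
target = [0.0, 0.5, 1.0, 0.5]   # ground truth output
close = [0.0, 0.5, 1.0, 0.25]   # generated image near the ground truth
far = [1.0, 0.0, 0.0, 1.0]      # generated image far from the ground truth

print("loss, close output:", pix2pix_generator_loss(0.5, close, target))
print("loss, far output:", pix2pix_generator_loss(0.5, far, target))
```

Even with the same discriminator score, the output that is farther from the ground truth is penalized much more heavily, which is what pushes the generator toward the desired image rather than just any realistic-looking one.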

CycleGAN is a newer technique for image-to-image translation that uses an unsupervised learning approach. Unlike pix2pix, CycleGAN does not require paired training data, meaning that it can learn to translate between two types of images without having to explicitly match them. Instead, it uses two generators and two discriminators, one of each per domain. Each generator translates in one direction, and the pair is trained so that an image translated to the other domain and back again comes out close to the original, a constraint known as cycle consistency. The discriminators are trained to distinguish between the generated images and the real images in their respective domains.
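The idea of translating an image to the other domain and back can be written as a cycle-consistency loss. The sketch below is a toy illustration under stated assumptions: the two "generators" are plain Python functions standing in for neural networks, images are flattened lists of pixel values, and all names are hypothetical. The full CycleGAN objective also includes adversarial losses from the two discriminators, which are left out here.

```python
def mean_abs_error(a, b):
    """Mean absolute difference between two flattened images of equal size."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def cycle_consistency_loss(g_ab, g_ba, batch_a, batch_b):
    """Penalize images that do not survive a round trip through both generators."""
    # A -> B -> A should reproduce the original image from domain A.
    forward = sum(mean_abs_error(g_ba(g_ab(x)), x) for x in batch_a) / len(batch_a)
    # B -> A -> B should reproduce the original image from domain B.
    backward = sum(mean_abs_error(g_ab(g_ba(y)), y) for y in batch_b) / len(batch_b)
    return forward + backward

# Toy stand-ins for trained generators.
def invert(img):
    return [1.0 - p for p in img]

def darken(img):
    return [0.5 * p for p in img]

batch_a = [[0.0, 0.25, 1.0]]  # unpaired samples from domain A
batch_b = [[0.5, 0.75, 0.0]]  # unpaired samples from domain B

# invert undoes itself, so the round trip is lossless.
print("consistent pair:", cycle_consistency_loss(invert, invert, batch_a, batch_b))
# darken does not undo invert, so the round trip changes the image.
print("inconsistent pair:", cycle_consistency_loss(invert, darken, batch_a, batch_b))
```

Note that `batch_a` and `batch_b` are never compared with each other. The loss only checks each image against its own round trip, which is why no paired data is needed.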

In practice, these techniques support artistic effects ranging from transforming photographs into paintings or sketches to creating digital designs and patterns. They can also be used in scientific and engineering applications, such as creating maps or simulating changes in environmental conditions.